Artificial Intelligence in Autonomous Systems: From Theory to Real-World Deployment

Artificial Intelligence (AI) has become a core enabling technology in modern autonomous systems. From self-driving vehicles to service robots, AI allows machines to perceive their environment, make decisions, and adapt to dynamic conditions without continuous human intervention. In this post, we explore the role of AI in autonomous systems and how it is applied in real-world robotic platforms.

The Role of AI in Robotics

Traditional robotic systems rely heavily on predefined rules and deterministic control logic. While effective in structured environments, these approaches struggle in real-world scenarios where uncertainty, noise, and dynamic obstacles are present. AI addresses these limitations by letting robots learn patterns from data instead of relying solely on hand-written rules, so they can handle situations their designers did not explicitly anticipate.

In autonomous mobile robots, AI is commonly used for:

- Environment perception

- Object detection and classification

- Decision-making and behavior planning

- Adaptive control and optimization

By integrating AI, robots can operate more safely, efficiently, and autonomously in unstructured environments.

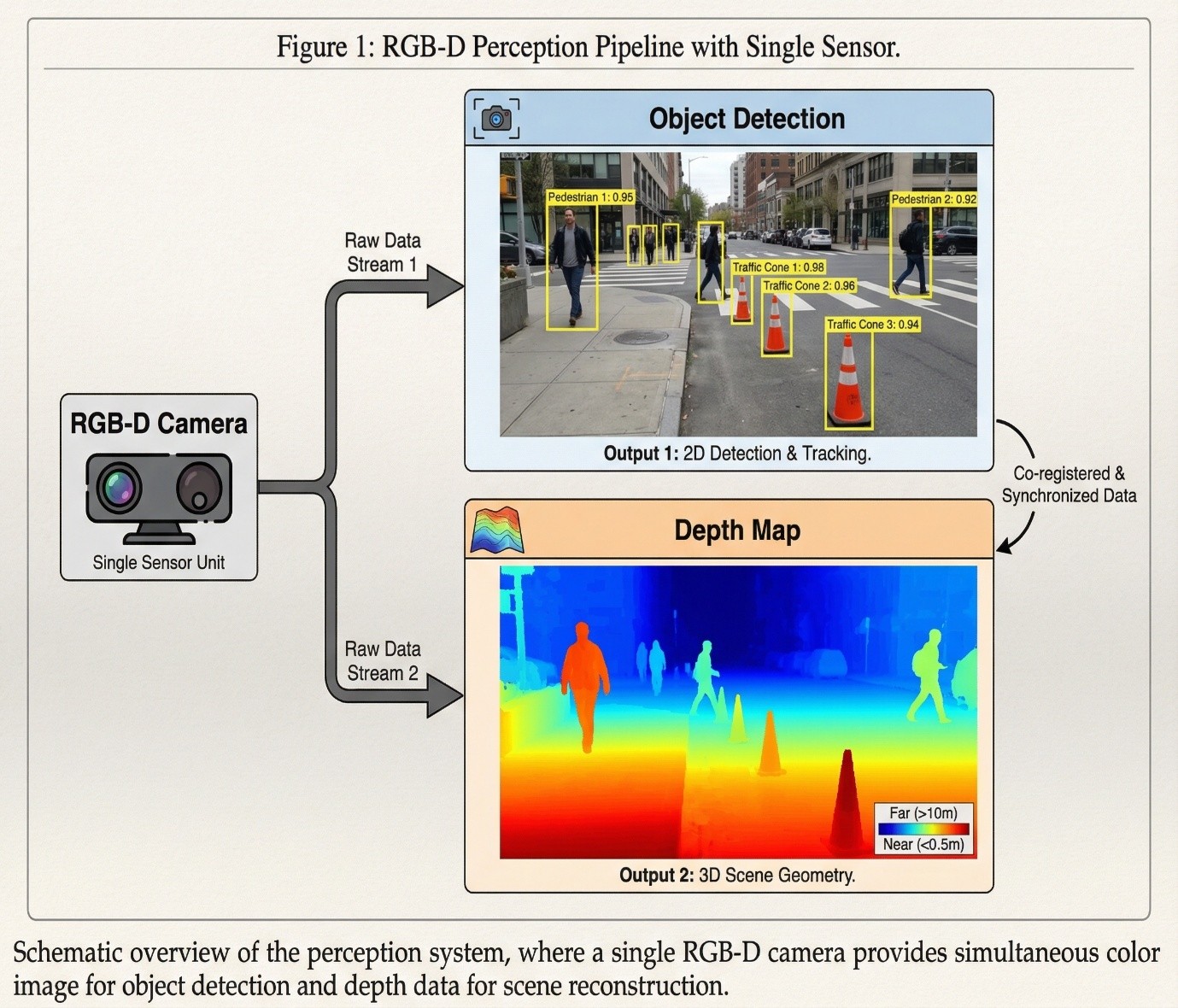

AI-Based Perception

Perception is one of the most critical components of an autonomous system. AI models, particularly deep-learning-based computer vision models, allow robots to interpret visual data from cameras and depth sensors. These models can detect obstacles, recognize objects, and estimate spatial relationships in real time.
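Deep-learning object detectors usually produce many overlapping candidate boxes for the same object, and a common post-processing step is greedy non-maximum suppression (NMS). The sketch below is a minimal, framework-free illustration of that step, assuming boxes in `(x1, y1, x2, y2)` pixel format with per-box confidence scores; the function names are hypothetical and not taken from any particular library or from the project described in this post.

```python
from typing import List, Tuple

# Hypothetical box format: (x1, y1, x2, y2) in pixels, with x2 > x1 and y2 > y1.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(boxes: List[Box], scores: List[float],
                        iou_threshold: float = 0.5) -> List[int]:
    """Greedy NMS: return indices of the kept boxes, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        # Keep box i only if it does not overlap too much with any box already kept.
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in keep):
            keep.append(i)
    return keep


boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (100, 100, 140, 140)]
scores = [0.9, 0.8, 0.75]
print(non_max_suppression(boxes, scores))  # the near-duplicate second box is suppressed
```

The 0.5 IoU threshold is only an illustrative default; lower values suppress more aggressively, which can merge genuinely separate objects that stand close together.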

In our autonomous delivery robot project, AI-based perception enables the system to understand its surroundings and respond to changes such as moving objects or unexpected obstacles. This capability significantly improves navigation safety and reliability.

Edge AI and Real-Time Constraints

Deploying AI on autonomous robots introduces unique challenges. Unlike cloud-based AI services, which can draw on large data-center hardware, mobile robots usually run inference on limited onboard computers and must meet strict real-time requirements. This makes model optimization and efficient inference essential.
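Real-time requirements are usually expressed as a per-frame deadline, so one simple check is to time repeated inference calls and compare a tail percentile (not just the mean) against that budget. The sketch below assumes a zero-argument callable `infer` standing in for one forward pass of a model; the helper names and the choice of the 95th percentile are illustrative assumptions, not a standard.

```python
import math
import time
from typing import Callable, List


def measure_latencies_ms(infer: Callable[[], object], runs: int = 50) -> List[float]:
    """Time repeated calls to a (hypothetical) inference function, in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        infer()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def percentile(samples: List[float], p: float) -> float:
    """Nearest-rank percentile, with p in the range 0..100."""
    if not samples:
        raise ValueError("no latency samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100.0))
    return ordered[rank - 1]


def meets_deadline(samples: List[float], budget_ms: float, p: float = 95.0) -> bool:
    """True if the p-th percentile latency fits within the per-frame budget."""
    return percentile(samples, p) <= budget_ms
```

For example, a camera pipeline running at 10 Hz leaves at most 100 ms per frame for the whole perception-to-control loop, so the model's share of that budget has to be smaller still. It is usually worth discarding a few warm-up calls before timing.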

Edge AI techniques focus on:

- Lightweight model architectures

- Low-latency inference

- Energy-efficient computation

By optimizing AI models for edge deployment, autonomous robots can perform intelligent tasks without relying on constant cloud connectivity.
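One widely used way to shrink a model for edge hardware is post-training quantization, which stores weights as 8-bit integers plus a scale factor. The sketch below shows symmetric per-tensor int8 quantization on a plain list of weights; it is a simplified illustration with hypothetical function names, not the scheme of any specific toolkit, and production frameworks add details such as per-channel scales and calibration of activations.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization of float weights to int8 values."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # One scale for the whole tensor; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer and clamp to the symmetric range [-127, 127].
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Map int8 values back to approximate float weights."""
    return [v * scale for v in q]


weights = [0.5, -1.27, 0.0, 1.27]
q, scale = quantize_int8(weights)
print(q)  # integer codes; each weight is recovered to within scale / 2
```

Storing 8-bit integers instead of 32-bit floats cuts weight memory by roughly a factor of four, and many edge accelerators execute integer arithmetic more efficiently than floating point; the trade-off is a small, bounded rounding error per weight.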

Integration with Control and Middleware

AI does not operate in isolation. In autonomous systems, AI modules must integrate seamlessly with control algorithms, sensor fusion pipelines, and middleware frameworks such as ROS 2. This integration ensures that perception outputs can directly influence navigation, motion planning, and low-level control decisions.
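To make the idea of a sensor fusion pipeline concrete, the sketch below fuses two independent distance estimates of the same obstacle (for example, one from a camera-based model and one from a depth sensor) by weighting each with the inverse of its variance. This is equivalent to the scalar measurement update of a Kalman filter. The sensor pairing, noise values, and function name are hypothetical, and the sketch assumes Gaussian, uncorrelated noise.

```python
from typing import Tuple


def fuse_estimates(z1: float, var1: float, z2: float, var2: float) -> Tuple[float, float]:
    """Inverse-variance weighted fusion of two independent measurements.

    Returns (fused_value, fused_variance). Assumes Gaussian, uncorrelated noise.
    """
    if var1 <= 0 or var2 <= 0:
        raise ValueError("variances must be positive")
    w1, w2 = 1.0 / var1, 1.0 / var2
    fused = (w1 * z1 + w2 * z2) / (w1 + w2)
    fused_var = 1.0 / (w1 + w2)  # always smaller than either input variance
    return fused, fused_var


# Hypothetical readings: camera-based depth 2.0 m (var 0.04), depth sensor 2.2 m (var 0.01).
distance, variance = fuse_estimates(2.0, 0.04, 2.2, 0.01)
```

With these illustrative numbers the fused distance is about 2.16 m with variance 0.008, pulled toward the less noisy sensor and more certain than either reading alone.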

A well-designed architecture allows AI components to enhance system intelligence while maintaining reliability and safety.
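As a small illustration of perception outputs directly influencing motion, the sketch below caps the robot's forward speed according to the nearest detected obstacle. The `Detection` type, the thresholds, and the linear slow-down rule are hypothetical choices for illustration, not the controller used in our project. In a ROS 2 system, logic like this would typically sit in a node that subscribes to a perception topic and publishes velocity commands (commonly as `geometry_msgs/msg/Twist`); that plumbing is omitted here so the sketch runs on its own.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Detection:
    """Hypothetical perception output: object label and estimated distance in meters."""
    label: str
    distance_m: float


def speed_limit(detections: List[Detection], max_speed: float = 1.0,
                stop_dist: float = 0.5, slow_dist: float = 2.0) -> float:
    """Scale the allowed forward speed by the nearest detected obstacle.

    - nearest obstacle at or inside stop_dist: stop (0.0)
    - between stop_dist and slow_dist: scale linearly from 0 up to max_speed
    - at or beyond slow_dist, or nothing detected: full max_speed
    """
    if not detections:
        return max_speed
    nearest = min(d.distance_m for d in detections)
    if nearest <= stop_dist:
        return 0.0
    if nearest >= slow_dist:
        return max_speed
    return max_speed * (nearest - stop_dist) / (slow_dist - stop_dist)


print(speed_limit([Detection("person", 1.25), Detection("box", 3.0)]))  # half speed
```

Keeping this rule as a plain function, separate from the detector, makes the safety behavior easy to unit-test and lets the perception model be swapped without touching the control logic.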

The Future of AI in Autonomous Robots

As AI technologies continue to evolve, autonomous systems will become more capable and versatile. Advances in perception, learning-based control, and multi-modal sensor fusion are expected to further improve autonomy in complex environments.

For engineering projects and real-world applications alike, AI represents a powerful tool that bridges the gap between theoretical models and practical autonomous behavior.

Conclusion

Artificial Intelligence is a fundamental pillar of modern autonomous systems. By enabling perception, decision-making, and adaptability, AI transforms robots from rigid machines into intelligent agents capable of operating in real-world environments. Through careful integration, optimization, and validation, AI can be effectively deployed to support safe and reliable autonomy.